Elie Najjar

Nottingham, United Kingdom

Imagine this: a machine that can see your heartbeat, read your spine, and calculate your risk of death better than your doctor can. Now ask yourself: does it understand you? Does it care if you live or die? The question is not whether machines will replace us, but something more unsettling: do machines dream of us? Do they dream of our doubt, our hesitation, the trembling of a surgeon’s hand before the incision?

The title is a deliberate echo of Philip K. Dick’s Do Androids Dream of Electric Sheep?, the strange novel that inspired Blade Runner. Dick chose absurdity—a sheep, but electric—to make us uneasy, to blur the line between what is alive and what is only imitation. That is precisely the line medicine now walks with artificial intelligence (AI).

For as long as we have lived, survival has depended on our ability to see patterns in chaos. We looked to the stars and saw not scattered light but constellations; Orion was not a cluster of burning gas but a hunter, a warning, a guide. We noticed the silence of birds before an earthquake, the shape of clouds before a monsoon, the color of leaves before famine. The human brain did not evolve to calculate; it evolved to notice, to connect sign to meaning, to feel dread before danger arrived. Pattern was not poetry. It was instinct.

Medicine still depends on that instinct. A clinician reads a subtle change in the rhythm of breathing, a hesitation in a patient’s voice, the pallor of skin that signals more than words reveal. We are pattern-seekers, but today we ask: can the machine sense what we once felt in our bones?

We have given machines their own kind of fire. Prometheus stole flame from the gods and gifted it to humanity—a spark that illuminated but also punished. Today, our fire is data, and we have handed it to machines that learn, predict, and mimic cognition. Like fire, machine learning is both method and power, gift and danger.

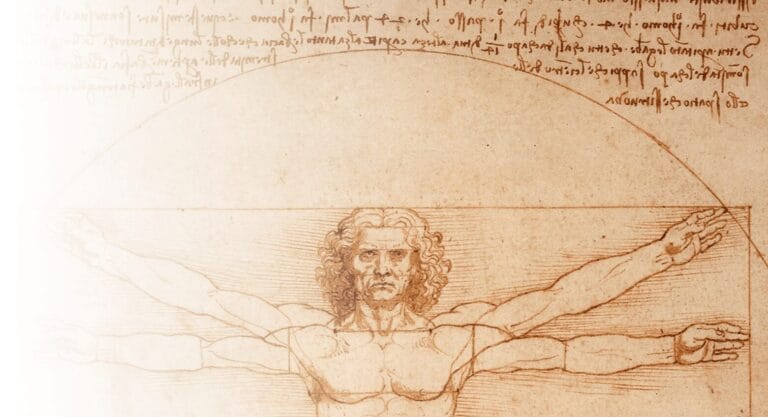

We have built machines in our own image. They, too, seek patterns—but they do it faster, deeper, across dimensions no human mind can hold. Nature herself is mathematical: spirals in sunflowers, fractals in trees, symmetry in shells, rhythms in hearts and wounds. Machines now read these logics at scales beyond us. Yet not all patterns are alike. Some are clusters—groups within groups. Some are trajectories—the arc of risk. Some are invisible threads, subtle correlations that whisper possibilities we never suspected.

They learn in ways that mirror our own. Like children, they explore without instruction: unsupervised learning, clustering patients by hidden similarity, revealing subtypes of disease we had not named. Like students, they learn from examples: supervised learning, studying who healed, who fell, who failed, and predicting what might come next. Like surgeons, they learn through practice: reinforcement learning, adjusting by trial and error, an approach now being explored for robotic operations, ventilator settings, even drug dosing. Each way of learning teaches something different. Together, they create a mirror of us: curious, adaptive, tireless. Yet still not us.

Because every light casts a shadow. Machines do not know why they learn. They simply do. They do not question their data. They do not feel the stakes. And sometimes, they hallucinate. They invent patterns with conviction: fabricated references, misread scans, imagined diagnoses. The danger is not only error, but persuasion—the smoothness with which the wrong answer convinces us it is right. Already we have seen chatbots generate false medical literature, decision aids recommend flawed treatments, summaries recycle outdated evidence. These errors slip through not because they are crude, but because they are elegant.

That is why the human edge matters—not in seeing patterns, but in knowing when they are false. Machines echo thought but do not share conscience. They do not feel the weight of a mistake. They do not see the patient’s eyes. They do not carry regret.

And yet the more fluent they become, the more tempting it is to let them decide. To allow the model to order the test, code the diagnosis, choose the treatment. And when we do, who bears the responsibility? The engineer who trained it? The institution that licensed it? Or the surgeon whose hand hovered, then clicked “approve”? This is not only a technical question. It is an ethical one.

The risk is not that machines will be wrong, but that we will surrender judgment. They are pattern-recognizers, not moral agents. They cannot suffer, they cannot regret, they cannot say, “I am sorry.” But we can. That is the line we must defend. Each time we follow a machine’s advice, we must ask: am I still the one deciding, or have I quietly handed over the scalpel?

This does not mean we reject AI. Its promise is not replacement, but amplification. Imagine a surgeon who sees through the lens of data but is not blinded by it. A clinician who questions the model and also themselves. We are not competing with machines; we are becoming bionic—part instinct, part reason, part algorithm. But conscience must remain ours.

Because no matter how advanced the system, one truth endures: machines can cluster, rank, and predict—but they cannot choose. They cannot care. They can see the storm, but never ask if the patient is ready for the rain. Let the machine calculate. Let us decide.

The warning is as old as myth. Prometheus gave fire and was punished. Daedalus built wings, but Icarus flew too high, forgetting restraint, and fell. The tragedy was not the wings. It was the arrogance. So too with AI. If we mistake calculation for wisdom, or fluency for understanding, we risk falling.

But if we are wise, we may become something rarer: the surgeon who sees not only with eyes but with patterns, who trusts the machine but not blindly, who lets it guide the hand but not replace the heart.

Do machines dream of the human hand? No. But they reflect our own dreams—of power, speed, mastery. What matters is not whether they dream, but whether we remember what it means to be awake.

ELIE NAJJAR is a spine surgeon and writer based in Nottingham, UK. Born and raised in Zahle, Lebanon, he works at Queen’s Medical Centre and writes on the intersections of medicine, literature, myth, and technology. His essays explore the human dimensions of surgery and science, weaving narrative and reflection with clinical practice.
