Philip Liebson

Chicago, Illinois, United States

We begin by citing a distinction frequently made between two types of physical theory. One type starts from a series of equations whose purpose is to describe phenomena; it is called a phenomenological, or mathematical descriptive, theory. In the second type, physical facts are not only described in mathematical relationships but also explained, and over the past four centuries the explanation has frequently been given by a methodological device called a “mechanical model”. The phenomenon of light, for example, has been described by a mechanical model of the “ether” through which light waves are propagated and by a mechanical model of the atom or particle that emits the light. These mechanical models rest on two factors: (1) the laws of mechanics and of physical phenomena expounded in the seventeenth and eighteenth centuries, especially Newton’s laws of force and motion, and (2) the so-called “common sense”, “intuitive”, or “empirical” basis for the models. The second type of theory is called a causal theory, insofar as it gives not only a description but a cause. Some theorists, especially before the twentieth century, believed that a phenomenological theory is helpful only in recording facts already known, whereas a causal theory is helpful in discovering new facts.

The use of mechanical models has, however, led to considerable difficulty in describing such phenomena as the propagation of light, which raises the question of their validity. In the early nineteenth century, scientists were convinced by the work of Young and Fresnel that light was undulatory in nature and that there must be some medium through which the waves were propagated. This medium, moreover, had to comply with the two factors mentioned above, namely the laws of mechanics and the factor of intuition. Once this medium, the ether, was accepted, the question arose as to what its dynamic laws were. The mechanical model required the ether to be extremely hard, or rigid, and to penetrate all transparent bodies, even one as hard as diamond. At the same time, it had to be an exceedingly rarefied fluid, so that the motion of matter through space would not be retarded by it. This is, of course, deeply paradoxical, and it leads to the question of how an extremely fluid medium can be deformed in such a manner as to produce the transverse waves of light.

Some tried to deny the possibility of transverse vibrations because they conflicted with the intuitive factor, but many overlooked the fact that transverse waves were implied by the facts of polarization and double refraction. Attempts were made to make the ether fit this conception and still agree with intuition. The problem was never solved, perhaps because it rests on a contradiction that has prevailed in scientific thought, one found in the idea of causality itself. Early investigation of the propagation of light showed that light behaves as a transverse wave, like the waves that can be seen travelling through a vibrating solid. If the properties of a solid that accompany such visible transverse vibrations cannot be found in the medium of light, however, one can either refuse to believe the facts, which in fact happened, or attempt to construct a picture or mechanical model, which turned out to be filled with inner contradictions. If light is conceived of as itself a disturbance in the form of a transverse wave, without expecting it to be the undulation of something else, the contradictions disappear. The oversight may lie in the fallacy that intuition is essentially “true”, even when it is only apparently true.

In the methodology of science, the phenomenological theory is by no means separate from the causal theory. When the equations of a causal theory are formulated, however, they must be derivable from previous equations (equations of motion, force, etc.) propounded by theoreticians whose work rested on the same observation and conception of the physical world that formed the basis of the mechanical model itself. The important point here is that a causal theory carries a restriction: its formulae must be secondarily derived. Conversely, when a phenomenological theory is propounded, its formulae and equations may at first not be related in any way to previous laws; once they are subjected to explanation, however, they are compared with the previous laws and a connection between them is attempted. If no connection is found, the newly propounded formulae may not fit into the system of mechanical models built on the primary laws of motion, force, etc. Nevertheless, they do describe the particular phenomena.

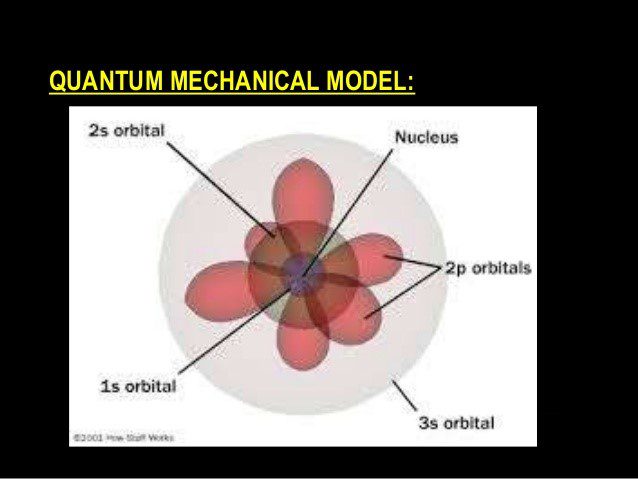

Another difficulty arises when we assume that all physical phenomena should be explained by mechanical models. The motions of the smallest molecules and particles certainly cannot be covered by the same equations as those that describe the motions of intermediate or large bodies. Quantum theory and the field theory used in cosmology have not been reconciled in a single set of equations or models; this is perhaps the most perplexing problem in physics.

What is more, the same holds for bodies moving at very high relative velocities. No mechanical model can cover these discrepancies. If the phrase “causal explanation” means derivable from Newton’s laws of motion, then it must be accepted that the laws of modern physics are not causal, only descriptive. Causality in the sense of generality is now widely accepted by scientists: laws of nature are to be taken as exceptionless repetition and nothing further. It is not out of the question, however, to take an isolated condition that only the modern laws describe adequately and to “explain” it by means of a particular model, not necessarily mechanical, that is directly applicable to a system of mathematics, not necessarily Euclidean. Such a model would be hard to conceive of, because the second factor, the factor of “intuition”, would not necessarily hold.

Consideration must also be given to statistical phenomena, that is, occurrences that must be attributed to an aggregate of particles, which are “consistent” because they obey certain laws under similar conditions. The laws do not hold for one or even a few of these particles, only for aggregates. Statistical laws were first introduced into physics in the nineteenth century, in connection with the conversion of heat into mechanical work and vice versa. The theory of this conversion faces great difficulty when applied to the kinetic theory of gases, according to which a gas consists of a great many molecules that swarm in all directions, collide with each other, and describe zigzag paths at enormous speeds. The difficulty concerns the large number of facts connected with the existence of irrecoverable, or irreversible, changes in the universe.

Directly connected with this is the second law of thermodynamics. This law states that heat flows spontaneously in only one direction, from hotter to colder bodies, so that the entropy of an isolated system tends to increase while its total energy remains constant. According to the law, if gas molecules originally assembled in a small corner of an isolated container spread until they are equally distributed throughout it, the entropy of the system increases. Since the entropy of an isolated system can only increase, the change is irreversible: the gas can never undergo a change in density by which the molecules are once more assembled in the corner.
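
A standard worked example makes the entropy claim quantitative. Assuming an ideal gas of N molecules expanding freely at constant temperature from the corner into the whole container, and assuming for illustration that the corner occupies one tenth of the container (a figure not given in the text), Boltzmann’s relation gives the entropy change and the probability of finding every molecule back in the corner at a given instant:

$$
S = k_B \ln W, \qquad \Delta S = N k_B \ln\frac{V_2}{V_1}
$$

$$
\frac{V_2}{V_1} = 10: \qquad \Delta S = N k_B \ln 10 \approx 2.30\, N k_B > 0, \qquad P(\text{all } N \text{ in the corner}) = \left(\tfrac{1}{10}\right)^{N} = 10^{-N}
$$

Read statistically, the return to the corner is not strictly forbidden, only astronomically improbable when N is of the order of the number of molecules in a macroscopic sample.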

According to the kinetic theory, however, it is conceivable that the gas equally distributed throughout the container could return to its original position in the corner. This could be accomplished by reversing the direction of the velocities of all the particles. According to Newton’s laws of motion, which in theory govern the motion of the individual molecules, the molecules would then retrace their previous paths until they finally reached the original state. The kinetic theory therefore seems to be incompatible with the idea of irreversible or irrecoverable changes of state, and consequently with the second law of thermodynamics. It follows that Newton’s mechanics is also incompatible with irreversible changes. Thus, the kinetic theory of heat needs an additional means of description besides Newton’s laws of motion.
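
The velocity-reversal argument can be checked in a deliberately simple toy model. The sketch below assumes non-interacting particles on a one-dimensional lattice with reflecting walls (collisions are ignored, and the names `unit_step`, `advance`, and `fraction_in_corner` are illustrative, not taken from any source); integer arithmetic keeps the reversal exact, which a floating-point simulation would not guarantee. It is not meant to model realistic mixing, only to show that deterministic, time-symmetric dynamics carries the “spread” gas back into its corner once every velocity is reversed.

```python
import random

def unit_step(x, s, size):
    """Move one lattice site in direction s (+1 or -1) between walls at 0 and size - 1.
    At a wall the particle stays put and flips direction; this rule is exactly
    time-reversible."""
    if 0 <= x + s < size:
        return x + s, s
    return x, -s

def advance(particles, size, ticks):
    """Advance non-interacting particles (x, v) for `ticks` ticks.
    A particle with speed |v| = k takes k unit steps per tick."""
    result = []
    for x, v in particles:
        s, k = (1 if v > 0 else -1), abs(v)
        for _ in range(ticks * k):
            x, s = unit_step(x, s, size)
        result.append((x, s * k))
    return result

def reverse(particles):
    """Reverse the velocity of every particle, as in the thought experiment."""
    return [(x, -v) for x, v in particles]

def fraction_in_corner(particles, corner):
    """Fraction of particles located on sites 0 .. corner - 1."""
    return sum(1 for x, _ in particles if x < corner) / len(particles)

random.seed(0)
SIZE, CORNER, N, TICKS = 1000, 50, 500, 400
# every particle starts in the "corner" (sites 0..49) with a random nonzero speed
start = [(random.randrange(CORNER), random.choice([-3, -2, -1, 1, 2, 3]))
         for _ in range(N)]
spread = advance(start, SIZE, TICKS)           # every particle leaves the corner
back = advance(reverse(spread), SIZE, TICKS)   # reverse all velocities, run again

print(fraction_in_corner(start, CORNER), fraction_in_corner(spread, CORNER))
print(back == reverse(start))  # each particle is back where it began, moving the opposite way
```

The walls use a “stay and flip” bounce rather than a mirrored position so that a single step is its own time-reversed inverse; composing such steps keeps the whole run reversible.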

Statistical hypotheses have since been developed to overcome this difficulty. According to the statistical hypotheses propounded by Boltzmann, for example, “irreversibility” is only a superficial effect: if the phenomena were observed in more detail and over a long enough period of time, the initial state would reappear. The important difference between a statistical hypothesis and a mechanical one is that the statistical hypothesis makes no assumption about how a specific particle behaves at a specific time in a given field of force. If a mechanical model were used in this case, it would have to be with the understanding that the ideal motions of observable particles are only approximations, and that these approximations theoretically obey Newton’s laws. Once one begins to examine the actual motion of a molecule and the motions of the particles that form it, however, it becomes clear that Newtonian mechanics cannot account for these motions, as Heisenberg’s uncertainty principle and the field of quantum mechanics show.
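
Boltzmann’s point that the initial state eventually reappears can be illustrated with the Ehrenfest urn model, a standard textbook toy model swapped in here (the essay does not mention it): N balls sit in two urns, and at each tick one ball chosen at random moves to the other urn. The count quickly drifts toward an even split, yet the starting state “all balls in urn A” keeps recurring. By Kac’s lemma the mean recurrence time of a state is the reciprocal of its equilibrium probability, here 2^N. The function name and parameters below are illustrative assumptions, and the run is a sketch rather than a model of any real gas.

```python
import random

def ehrenfest_recurrences(n_balls, n_returns, seed=0):
    """Ehrenfest urn model: each tick one ball, chosen uniformly at random,
    switches urns. Returns the waiting times between successive visits to
    the state 'all balls in urn A'."""
    rng = random.Random(seed)
    in_a = n_balls              # start with every ball in urn A (the "corner")
    times, t = [], 0
    while len(times) < n_returns:
        t += 1
        # the chosen ball is in urn A with probability in_a / n_balls
        if rng.randrange(n_balls) < in_a:
            in_a -= 1
        else:
            in_a += 1
        if in_a == n_balls:     # the initial state has recurred
            times.append(t)
            t = 0
    return times

times = ehrenfest_recurrences(n_balls=10, n_returns=300)
mean_return = sum(times) / len(times)
print(f"mean recurrence time: {mean_return:.0f} ticks (Kac's lemma predicts {2**10})")
```

Because the mean recurrence time grows as 2^N, a handful of balls returns to its initial state often, while for a number of molecules of the order of 10^23 the expected wait vastly exceeds the age of the universe; this is the sense in which irreversibility is “superficial” yet practically absolute.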

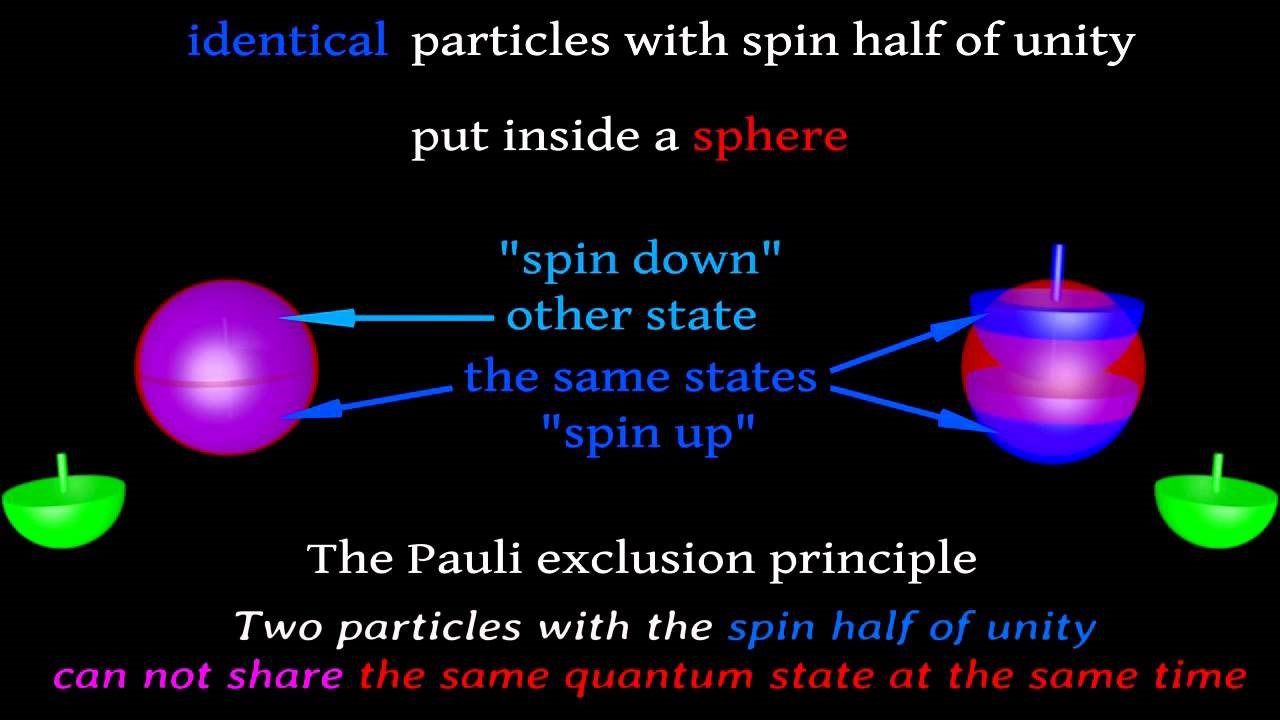

In setting up these statistical hypotheses, their compatibility with Newton’s mechanics has to be assumed as a special hypothesis. This special hypothesis might reconcile the individual with the aggregate activity of the molecules, among other conditions. If the special hypothesis is rejected and the connection with Newtonian mechanics is thus severed, the conclusions drawn from the kinetic theory are altered substantially. In modern wave mechanics, not only Newtonian mechanics but also Boltzmann statistics have been replaced by other hypotheses. These statistical hypotheses now depend upon the constitution of the particles investigated. Thus we have Bose–Einstein statistics, which apply to photons and other particles of integer spin, and Fermi–Dirac statistics, which apply to electrons and other particles of half-integer spin. Bose–Einstein statistics describe one of two possible ways in which a collection of indistinguishable particles may occupy a set of available discrete energy states at thermodynamic equilibrium. Fermi–Dirac statistics describe the distribution over energy states of many identical particles that obey the Pauli exclusion principle; they apply to such particles at thermodynamic equilibrium when their mutual interaction is negligible. A mechanical model would be out of the question here, considering not only the conditions necessary but also the difficulty of reconciling these occurrences with “common sense” or “intuitiveness” based upon a Newtonian universe.
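
The difference between the two quantum statistics shows up most simply in the mean number of particles occupying a single energy state, given by the standard distributions n(ε) = 1 / (e^((ε − μ)/k_BT) − 1) for Bose–Einstein and n(ε) = 1 / (e^((ε − μ)/k_BT) + 1) for Fermi–Dirac, with the classical Maxwell–Boltzmann value e^(−(ε − μ)/k_BT) for comparison. The sketch below works in the reduced energy x = (ε − μ)/k_BT; the function name `occupancy` and its string flags are illustrative choices, not an established API.

```python
import math

def occupancy(x, kind):
    """Mean occupation number of one single-particle state.
    x    : reduced energy (energy - mu) / (k_B * T), dimensionless
    kind : 'BE' (Bose-Einstein), 'FD' (Fermi-Dirac) or 'MB' (Maxwell-Boltzmann)"""
    if kind == "BE":
        if x <= 0:
            raise ValueError("Bose-Einstein occupancy requires energy > mu (x > 0)")
        return 1.0 / math.expm1(x)          # 1 / (e^x - 1)
    if kind == "FD":
        return 1.0 / (math.exp(x) + 1.0)    # never exceeds 1: Pauli exclusion
    if kind == "MB":
        return math.exp(-x)                 # classical limit
    raise ValueError(f"unknown statistics: {kind}")

for x in (0.1, 1.0, 5.0):
    row = {k: round(occupancy(x, k), 4) for k in ("BE", "MB", "FD")}
    print(f"x = {x}: {row}")
```

Fermi–Dirac occupancy stays below 1, reflecting the exclusion principle, while Bose–Einstein occupancy can exceed 1 near x = 0; for x much larger than 1 both approach the Maxwell–Boltzmann value, which is why the older statistics works for dilute, high-temperature gases.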

We have been trying to show here what was stated previously, namely that the claim that the laws of modern physics are not causal, only descriptive, has a definite meaning only if the phrase “causal explanation” is used in the sense of being derivable from Newton’s laws of motion. This definition of causal explanation could make sense only if Newton’s laws were granted a special logical status, which would discriminate against other foundations of physics. Although we may say that phenomena are explained in some cases by Newtonian mechanics, this does not make it the only legitimate basis of explanation. In the seventeenth and eighteenth centuries it was believed that the validity of certain formulae, such as Newton’s, could be established philosophically without reference to observable evidence. Without that belief, formulae derived from Newton’s equations would be no more of an explanation than derivations from any other set of statements.

In a sense, therefore, the causal theory underlying Newton’s laws is differentiated from a descriptive or phenomenological theory by metaphysics. This had already been demonstrated before Newton in the case of the Ptolemaic system. Francis Bacon criticized the Copernican system for being merely descriptive, whereas, in his view, the Ptolemaic system provided understanding. The content of a system that is constantly in use and believed in comes more and more to be credited to “common sense”. Bacon condemned Copernicus in the name of a strictly empirical description of nature, not in the name of metaphysics. The models of epicycles show how far this could go.

Now we come to the question of whether causality is an ultimate principle or merely a substitute for statistical regularity. From the investigations of quantum mechanics we find that individual atomic occurrences do not lend themselves to a causal interpretation and are controlled only by probability laws. This result, formulated in Heisenberg’s principle of indeterminacy, is often taken as proof that the idea of strict causality must be abandoned and that the laws of probability take the place once occupied by the laws of causality. The causal structure of the physical world is then replaced by a probability structure, and understanding the physical world presupposes the elaboration of a theory of probability.
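
A short calculation with the standard position–momentum form of the principle, Δx · Δp ≥ ħ/2, shows why indeterminacy dominates for atomic particles yet is invisible for everyday bodies. Writing Δp = m · Δv gives the smallest velocity spread compatible with a given position spread. The masses and position spreads below are illustrative assumptions chosen for the comparison, and the helper name is hypothetical.

```python
HBAR = 1.054571817e-34  # reduced Planck constant, J*s

def min_velocity_spread(mass_kg, position_spread_m):
    """Smallest velocity uncertainty allowed by dx * dp >= hbar / 2, with dp = m * dv."""
    return HBAR / (2.0 * mass_kg * position_spread_m)

electron = min_velocity_spread(9.109e-31, 1e-10)  # electron confined to about one atom (~0.1 nm)
bead = min_velocity_spread(1e-3, 1e-6)            # 1 g bead located to within a micrometre
print(f"electron: {electron:.2e} m/s, bead: {bead:.2e} m/s")
```

The electron’s unavoidable velocity spread comes out at several hundred kilometres per second, whereas the bead’s is of the order of 10^-26 m/s, far below anything measurable; this is one concrete sense in which Newtonian description remains adequate for large masses.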

However, even in classical mechanics the analysis of causality shows that notions of probability are indispensable. In classical physics the causal laws are idealizations, and actual occurrences are more complex than the physical descriptions assume. Even for intermediate- and large-sized masses that obey Newton’s laws, corrections are needed because of the multiplicity of phenomena that have not yet been observed and recorded. Thus our mechanical models cannot begin to encompass all types of mechanical forces and motions, even though these may fit the scheme of Newton’s laws. The force of gravity, for instance, is treated as constant in the idealized model, but it actually varies with the height of the body above the Earth. Many other considerations like this one have to be taken into account. The more phenomena that are discovered under Newton’s laws, the more complex the mechanical model would have to become to remain accurate, as happened with the models of epicycles after Ptolemy.
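
The gravity example can be made concrete with Newton’s inverse-square law, g(h) = g₀ · (R / (R + h))², which corrects the idealized constant g. The sketch assumes a spherical, non-rotating Earth with approximate values g₀ = 9.81 m/s² and R = 6.371 × 10⁶ m; real gravity also varies with latitude, rotation, and local density, corrections this sketch deliberately omits.

```python
G0 = 9.81          # approximate surface gravitational acceleration, m/s^2
R_EARTH = 6.371e6  # approximate mean Earth radius, m

def gravity_at_height(h_m):
    """Inverse-square correction to the idealized constant g: g0 * (R / (R + h))**2."""
    return G0 * (R_EARTH / (R_EARTH + h_m)) ** 2

for h in (0.0, 1e3, 1e4, 4e5):  # ground, 1 km, 10 km, roughly the altitude of the ISS
    print(f"h = {h:>9.0f} m   g = {gravity_at_height(h):.4f} m/s^2")
```

At 10 km the correction is only about 0.3 percent, but at orbital altitudes it exceeds 10 percent, so an “ideal” model with constant g must accumulate such corrections as its range of application widens.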

With the idea in mind that even in classical mechanics our model contains a factor of probability, let us proceed to a more thorough investigation of the differences between Newtonian and wave mechanics, in order to ascertain the relationship between them and how practicable a mechanical model is in the latter case. First of all, the gap between the new wave mechanics of small masses and Newtonian mechanics must not be exaggerated. In Newtonian mechanics, it was shown that replacing ideal equations with operational probability factors apparently made the model more accurate. However, this raises a difficulty concerning sense impressions. What is to be regarded as a single sense datum? When we isolate a physical phenomenon and observe it, how do we know that we are not observing it taking place many times while taking it to occur only once? And what do we consider to be an average of a great many sense data? Finally, our observations in time are limited to what the eye can see and the ear can hear, but within microseconds events could appear and disappear without being perceived by the senses. Here again we meet the difficulty of “irreversibility”: an average of data may appear to support a phenomenon, while fleeting phenomena imperceptible to the senses go unnoticed. Take, for instance, a system in metastable equilibrium in which a component A appears to pass completely into state B. If the system were left for a thousand years or so, however, an equilibrium between A and B might eventually be reached. Thus, physical quantities do set up a partition between statistical theory and strictly causal theory, although both types are found to be indispensable.

How can we ascribe causality to the new wave theory? This could be accomplished by regarding the percentage of particles producing events in a certain region of space as physical reality. Causality would be lost, however, if the positions and momenta of the particles, instead of the particles themselves, were recognized as the variables that describe physical reality. This would be analogous to taking the phenomena produced by the emission of light rays to be identical with the light rays themselves. Newtonian mechanics does, however, allow a causal prediction of the position and velocity of particles, which it regards as physical reality. Wave mechanics, on the other hand, does not account for both the position and the momentum of a particle at a given instant, so that the anti-causal factor is eliminated. Prediction of positions and velocities has already been shown, in the case of Newtonian mechanics, to be questionable, because of the need for a statistical theory that allows for arbitrary factors in observing physical quantities.

Wave theory is also broader in its description of the region observed. Whereas Newton’s laws leave out the environmental influences around a particle, wave theory brings them in. These environmental influences, however, are considered separately for position and for momentum. In wave theory, a prediction starting from operational statements would have to rest either on operational statements about the position of a particle or on statements about its momentum. In Newtonian mechanics, prediction rests on the wider base of both the position and the momentum of a particle. We therefore see that the causality of Newtonian mechanics cannot be based upon an analogous causality in wave mechanics, or vice versa, since the two start from different variables in describing the condition of the region being investigated.

A further point drawn from these considerations concerning the causality of wave mechanics is the relation of the particle being investigated to its environment. The condition of a particle cannot be described objectively, in isolation, but only in its interaction with an instrument of measurement, and the working of this instrument can be described in terms of Newtonian mechanics. Einstein for a long time doubted the advisability of dropping the traditional conception of objective physical reality, in which physical reality is ascribed to a material particle without regard to its environment. Some have considered the principle of complementarity of particle and environment merely a provisional state of science; later, they believe, the Newtonian concept of physical reality might finally be applied to wave mechanics. However, it must always be kept in mind that the position of a particle can only be specified by describing the instrument by which that position is measured. Reality, then, must be considered in an operational sense in wave mechanics and never in a strictly empirical or metaphysical one.

Therefore, a model, to be useful, would have to be applied with certain considerations taken into account: (1) the model is one of an operational system, not one that may be considered “physically real”; (2) there is a duality of conditions, because two variables, position and momentum, must be used separately in describing phenomena; (3) the physical laws pertaining to the model depend upon which variable or variables are used; (4) the model must always be understood to picture a specific environment, in which the particles interact with an instrument of measurement. One must also bear in mind that causality depends upon the state being described, and that Newtonian causality may be considered differently from the causality of wave mechanics in its present state.

Mechanical models, of course, would continue to be important in the operational aspect of Newtonian mechanics. These models would of necessity differ from the models of wave mechanics, and it would be understood that both work on a purely operational basis. The models would then not contradict one another and would be useful in explaining the apparent phenomena within their specific realms. We must remember that science is primarily a conceptual scheme and ultimately depends on our sense impressions and thoughts.

Sources

- O’Donnell, Peter J. (2015). Essential Dynamics and Relativity. CRC Press. ISBN 978-1-466-58839-4.

- Thornton, Stephen T.; Marion, Jerry B. (2003). Classical Dynamics of Particles and Systems (5th ed.). Brooks Cole. ISBN 0-534-40896-6.

- Feynman, Richard P. (1985). QED: The Strange Theory of Light and Matter. Penguin.

- Haselhurst, Geoff (2000). The Metaphysics of Space and Motion and the Wave Structure of Matter.

- Born, M. (1949). Natural Philosophy of Cause and Chance, Oxford University Press, London.

- Sklar, L. (1995). Determinism, pp. 117–119 in A Companion to Metaphysics, edited by J. Kim and E. Sosa. Blackwell, Oxford, UK.

- Mackie, John L. (1988). The Cement of the Universe: A Study of Causation. Clarendon Press, Oxford, England.

- Salmon, W. (1984). Scientific Explanation and the Causal Structure of the World. Princeton University Press.

- Dirac, P.A.M. (1973). Development of the physicist’s conception of nature, pp. 1–55 in The Physicist’s Conception of Nature, edited by J. Mehra, D. Reidel, Dordrecht, ISBN 90-277-0345-0, p. 5: “That led Heisenberg to his really masterful step forward, resulting in the new quantum mechanics. His idea was to build up a theory entirely in terms of quantities referring to two states.”

- Cox, Brian; Forshaw, Jeff (2011). The Quantum Universe: Everything That Can Happen Does Happen. Allen Lane. ISBN 1-84614-432-9.

- Gaukroger, S. (2001). Francis Bacon and the Transformation of Early-Modern Philosophy. Cambridge University Press, Cambridge.

- Stone, A. Douglas (2013). Einstein and the Quantum. Princeton University Press. ISBN 978-0-691-13968-5.

- Sen, D. (2014). “The uncertainty relations in quantum mechanics” (PDF). Current Science 107 (2): 203–218.

- Furuta, Aya (2012). “One Thing Is Certain: Heisenberg’s Uncertainty Principle Is Not Dead”. Scientific American.

- Duhem, Pierre (1954). The Aim and Structure of Physical Theory. Princeton University Press.

- Taub, Liba Chia (1993). Ptolemy’s Universe: The Natural Philosophical and Ethical Foundations of Ptolemy’s Astronomy. Chicago: Open Court Press. ISBN 0-8126-9229-2.

- Wightman, W. P. D. (1953). The Growth of Scientific Ideas. Yale University Press, New Haven. Especially Chapters V (The Geometry of the Heavens), VII (The Rise of Terrestrial Mechanics), VIII (The Dawn of Universal Mechanics), X (The Newtonian Revolution).

- Nagel, E. (1953). The Causal Character of Modern Physical Theory, pp. 419–435 in Readings in the Philosophy of Science, edited by H. Feigl and M. Brodbeck. Appleton-Century-Crofts, New York.

PHILIP R. LIEBSON, MD, graduated from Columbia University and the State University of New York Downstate Medical Center. He received his cardiology training at Bellevue Hospital, New York and the New York Hospital Cornell Medical Center, where he also served as faculty for several years. A professor of medicine and preventive medicine, he has been on the faculty of Rush Medical College and Rush University Medical Center since 1972 and holds the McMullan-Eybel Chair of Excellence in Clinical Cardiology.
